From the lineage of Unix and Plan 9 comes the future

Safe agents are free agents.

InferNode is a lightweight operating system with mathematically proven isolation of AI agents. When an agent can only see what you grant, you can trust it to do more—and the human-AI team finally works.

See it in action

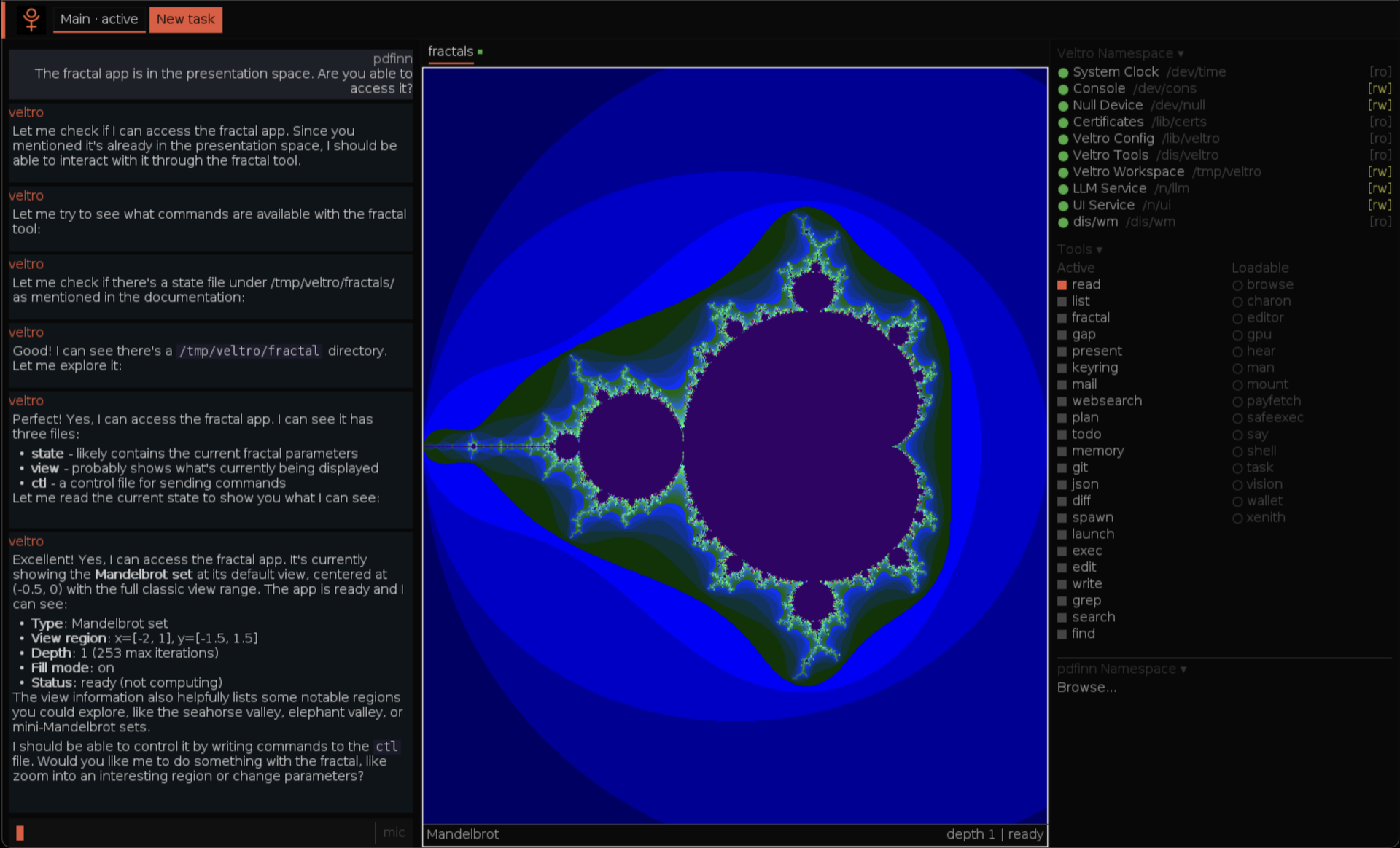

You and your agent, side by side.

Veltro runs applications, composes tools, and acts on your behalf—all within a namespace you control. You see everything it sees. It touches nothing you haven’t granted.

The Problem

AI agents are powerful.

That's the problem.

An AI agent that can read your files, call APIs, and spawn sub-processes is extraordinarily useful. It is also extraordinarily dangerous when it shares your operating system, your credentials, and your network access.

Today's answer is guardrails: sandboxes bolted on top, permission dialogs, policy layers you hope hold. The agent runs inside your world and you trust the cage. History suggests this is unwise.

InferNode inverts the model entirely.

Each agent gets its own namespace—a view of the world constructed from only the resources you explicitly mount. There is no cage because there is nothing to cage. The agent cannot access your credentials, your email, or your SSH keys because in its namespace, those things do not exist.

This changes everything. A provably contained agent is an agent you can trust with real autonomy. More autonomy means more productivity. And that is the point: security is not a constraint on the human-AI team. It is the foundation that makes the team possible.

Secure

Each AI agent runs in its own isolated namespace. It can only see what you explicitly grant. This is not a policy layer—it is how the operating system works, proven with formal verification.

Sovereign

You choose where your data lives and where your compute runs. Cloud, edge, local, or all three at once. No vendor decides for you.

Composable

Every resource—a GPU, an LLM, a database, a sensor—appears as a file. Plug anything into anything, across any device, with a single command.

Open

MIT licensed. Read the source, modify it, deploy it, sell it. No contributor license agreements. No license rug-pulls. No strings.

Capability-Based Security

If you didn't grant it, it doesn't exist.

Today, most agent frameworks give you two choices. Run the agent on your own machine—with your credentials, your network, and your data—and hope the guardrails hold. Or hand everything to a cloud platform that isolates the agent by moving your data onto someone else's computer. One trusts the agent too much. The other trusts the cloud too much.

InferNode works differently. Each agent gets its own namespace—a view of the world constructed from only the resources you explicitly share. Try to access something you weren't given? It doesn't error. It simply does not exist. There is no attack surface because there is no surface.
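A toy model makes the idea concrete. The Python sketch below treats a namespace as nothing more than a table of mount points. The `Namespace` class, its methods, and the resource names are hypothetical illustrations, not InferNode's API. A path resolves only if something was mounted over it, so an ungranted path has no answer at all.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Namespace:
    """Hypothetical model: an agent's namespace is a table of mount points."""

    mounts: dict = field(default_factory=dict)  # mount point -> resource label

    def mount(self, resource: str, where: str) -> None:
        # Grant a resource by binding it at a (non-root) path.
        self.mounts[where.rstrip("/")] = resource

    def resolve(self, path: str) -> Optional[tuple]:
        """Return (resource, path inside it), or None if nothing is mounted there."""
        path = path.rstrip("/")
        # Check the longest mount points first so nested mounts take priority.
        for point in sorted(self.mounts, key=len, reverse=True):
            if path == point or path.startswith(point + "/"):
                return self.mounts[point], path[len(point):] or "/"
        return None  # never granted, so it is not part of this world


agent = Namespace()
agent.mount("local-llm", "/n/llm")
agent.mount("project-docs", "/n/docs")

print(agent.resolve("/n/llm/0/ask"))              # ('local-llm', '/0/ask')
print(agent.resolve("/home/me/.ssh/id_ed25519"))  # None
```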

This isn't just a claim. The isolation model has been formally verified with three independent tools (TLA+, SPIN, and CBMC): 11 invariants proven exhaustively across 19.3 million states, 3.17 billion states explored in an extended run, and 113 CBMC assertions, with zero violations. Verification runs automatically in CI on every push.

Because agents are provably contained, you can give them more freedom—more tools, more data, more autonomy—without more risk. Safe agents are productive agents.

Full verification report →

Quantum-Safe Cryptography

Experimental

InferNode ships preliminary implementations of ML-KEM (FIPS 203) and ML-DSA (FIPS 204), the NIST-standardised, lattice-based algorithms the industry is migrating to now. Both NIST Level 3 (ML-KEM-768 / ML-DSA-65) and Level 5 (ML-KEM-1024 / ML-DSA-87) parameter sets are supported, with constant-time arithmetic throughout.

This work is not yet recommended for production, but cryptographic agility is a first-class design goal. If large-scale quantum computers break classical public-key cryptography, InferNode will already have a migration path, running natively on the same lightweight OS that protects your agents today.

Why Now

Three trends are converging.

InferNode exists at the intersection of shifts that are making secure, distributed AI not just possible but inevitable.

Trust needs proof, not promises.

As AI agents gain autonomy, “we tested it” is no longer enough. Prompt injection, confused deputies, privilege escalation—the attack surface grows with every capability you grant. Regulated industries are starting to require formal verification. InferNode’s isolation model is proven with TLA+, SPIN, and CBMC. The math exists. Ask for it.

Agents need a secure runtime.

Every major platform is racing to build multi-agent systems—LangGraph, CrewAI, OpenAI Agents, AWS Strands. But they all run agents with ambient authority inside your OS. Nobody has solved the fundamental problem: how do you let an agent do real work without giving it the keys to everything? InferNode’s namespaces are that answer.

Local inference just got real.

Small language models can now handle the majority of real-world queries on local hardware. Efficiency is improving at a staggering rate. The assumption that useful AI requires a cloud API is no longer true—and when your AI runs locally, you need an OS designed for it, not a browser tab.

A note on footprint: InferNode’s base runtime starts at ~15 MB RAM with a 2-second cold start. Real-world workloads—multiple agents, graphics, large models—will use more, as with any system. The point isn’t that it stays at 15 MB. It’s that it starts there, so it can run on hardware where heavier runtimes can’t even boot.

The Computer Is the Network

Your devices. One namespace. No cloud required.

Every resource—a GPU, a sensor, an LLM, a database—appears as a file. Mount it from anywhere. Your AI agent sees a single, seamless workspace.

Mount anything

A GPU cluster, a local LLM, a sensor feed, a cloud API—all appear as files. One command: mount tcp!host!port /n/resource
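The `tcp!host!port` form is a Plan 9 style dial string: network, address, and service separated by `!`. As a hedged illustration, this Python sketch splits one into its parts. The `parse_dial_string` helper, the `DialString` type, and the host `gpu-box` are hypothetical and not part of InferNode.

```python
from typing import NamedTuple, Optional


class DialString(NamedTuple):
    network: str            # e.g. "tcp"
    host: str               # machine name or address
    service: Optional[str]  # port number or service name; None if omitted


def parse_dial_string(s: str) -> DialString:
    """Split a Plan 9 style dial string such as 'tcp!host!port'."""
    parts = s.split("!")
    # Accept 'net!host' or 'net!host!service'; reject empty fields.
    if len(parts) not in (2, 3) or not all(parts):
        raise ValueError(f"not a dial string: {s!r}")
    network, host, *rest = parts
    return DialString(network, host, rest[0] if rest else None)


print(parse_dial_string("tcp!gpu-box!5640"))
# DialString(network='tcp', host='gpu-box', service='5640')
```

The sketch also accepts a two-part form and leaves the service as `None`, so the caller can supply a default.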

Agents see files, not APIs

Your AI agent reads and writes files. It doesn't need SDKs, API keys, or OAuth tokens. If a resource is mounted, the agent can use it.

Cloud is a peer, not a master

Connect to cloud services when you want. Disconnect when you don't. Your namespace works either way. No dependency. No lock-in.

Distributed by Design

Code moves. Apps stretch. Nothing restarts.

In InferNode, software isn’t installed—it’s mounted. Applications don’t run “on a machine”—they run across your namespace. These aren’t abstractions. This is how the OS works.

Hot-load code over the network

For developers

Compile a module on your laptop. Every device on your network can load and run it immediately—no redeploy, no container push, no deployment pipeline. Update it once, and every device picks up the change on next load.

In practice

Push a new ML preprocessing filter to a fleet of edge sensors. Roll out a security patch to every node in your mesh. Add a capability to an AI agent across all devices. All without downtime.

Run there. See here.

For developers

Applications export their interfaces as files over 9P. Mount a remote app’s namespace locally and interact with it as if it were running on your machine. The network boundary disappears at the OS level.

In practice

Run LLM inference on a GPU server in the closet, interact from your laptop. Analyse a dataset on a powerful remote machine, see results on a tablet in the field. One namespace. The hardware is irrelevant.

The Interface

One protocol. Any language.

Inside InferNode, every resource is a file: you interact with LLMs, sensors, and agents using open(), read(), and write(). From the outside, any language that speaks 9P (a simple, open protocol) can connect to InferNode and work with its resources directly. No vendor SDK. No API wrapper. Just a well-documented protocol and the language you already know.
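9P is small enough to speak from scratch. As an illustration (not InferNode code), this Python sketch encodes Tversion, the first message every 9P2000 client sends. It assumes the server negotiates plain "9P2000", as Inferno's Styx does, and it follows the 9P2000 wire layout. Every message starts with a 4-byte little-endian size, a 1-byte type, and a 2-byte tag. Strings are a 2-byte length followed by UTF-8 bytes. The msize of 8192 is an arbitrary choice for the example.

```python
import struct

TVERSION = 100  # 9P2000 message type for Tversion
RVERSION = 101  # ...and for the server's Rversion reply
NOTAG = 0xFFFF  # Tversion must use the special "no tag" value


def pack_string(s: str) -> bytes:
    # 9P strings: 2-byte little-endian length, then UTF-8 bytes (no NUL).
    data = s.encode("utf-8")
    return struct.pack("<H", len(data)) + data


def tversion(msize: int = 8192, version: str = "9P2000") -> bytes:
    """Encode size[4] Tversion tag[2] msize[4] version[s]."""
    body = struct.pack("<BHI", TVERSION, NOTAG, msize) + pack_string(version)
    # The size field counts the whole message, including its own 4 bytes.
    return struct.pack("<I", 4 + len(body)) + body


def parse_rversion(msg: bytes) -> tuple:
    """Decode an Rversion reply into (msize, version)."""
    size, mtype, tag, msize, vlen = struct.unpack_from("<IBHIH", msg)
    if mtype != RVERSION or size != len(msg):
        raise ValueError("not a well-formed Rversion")
    version = msg[13:13 + vlen].decode("utf-8")  # header is 13 bytes
    return msize, version


print(tversion().hex())  # 1300000064ffff002000000600395032303030
```

A real client would follow with Tattach, Twalk, Topen, and Tread or Twrite, each built the same way.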

Build a service. It becomes native.

Write a service in any language. Expose it via 9P. It becomes a file in the namespace—indistinguishable from a built-in resource. Other agents, tools, and users can mount and use it immediately. The filesystem is the universal contract.

The System

One secure OS. Use it your way.

InferNode is the foundation—provably isolated namespaces for every agent, on any hardware. On top of it, choose the interface that fits how you work. Or use no interface at all.

Veltro

The autonomous agent

Veltro is an AI agent that lives in a namespace, not inside an application. It reads files, invokes tools, queries LLMs, and spawns sub-agents—all within the boundaries you define. Headless or interactive. Your choice.

Lucia

Voice-first AI workspace

Lucia replaces the desktop with a conversational AI environment. Talk to your computer. The agent listens, acts, and presents results—documents, data, visualisations—without you ever opening an application. Security you can trust means an experience you can rely on.

Xenith

Developer power tool

A text environment built for developers and AI agents working side by side. Forked from Acme with async I/O, dark mode, and a 9P interface that lets agents interact through file operations—no SDK required. Every agent action is observable in real time.

Veltro

Built for autonomous work.

An AI agent that lives in a namespace, not inside an application. Every capability is a file. Every boundary is structural.

Namespace-Isolated

Each Veltro instance sees only the resources you mount into its namespace. No ambient authority, no inherited credentials, no attack surface. Isolation is not enforced by policy—it is structural.

Sub-Agents

Veltro can spawn child agents with a subset of its own capabilities. Each sub-agent gets a further-restricted namespace. Delegation without escalation—the principle of least privilege, applied recursively.
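Recursive least privilege can be modeled in a few lines. In this Python sketch, a mount table maps mount points to resources. It is a hypothetical model, not Veltro's code. The `delegate` function builds a child's table by keeping only the requested paths that fall inside the parent's own mounts, optionally narrowed to a sub-tree. Anything the parent cannot see is dropped, so no chain of delegation can widen access.

```python
def delegate(parent: dict, requested: list) -> dict:
    """Build a child's mount table from part of its parent's (hypothetical model).

    A mount table maps mount point -> resource. Each requested path must lie at
    or under one of the parent's mount points; the child then sees only that
    sub-tree. Paths the parent cannot see are silently dropped.
    """
    child = {}
    for path in requested:
        path = path.rstrip("/")
        # Longest mount point first, so nested grants are matched precisely.
        for point in sorted(parent, key=len, reverse=True):
            if path == point or path.startswith(point + "/"):
                child[path] = parent[point] + path[len(point):]
                break
        # No match: the parent never had it, so the child cannot get it.
    return child


agent = {"/n/llm": "local-llm", "/n/docs": "docs-server"}
researcher = delegate(agent, ["/n/docs/reports", "/home/me/.ssh"])
# /n/llm was held by agent but not by researcher, so summariser cannot get it.
summariser = delegate(researcher, ["/n/docs/reports/q3", "/n/llm"])
print(researcher)  # {'/n/docs/reports': 'docs-server/reports'}
print(summariser)  # {'/n/docs/reports/q3': 'docs-server/reports/q3'}
```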

LLM as Filesystem

Mount any language model at /n/llm via llm9p. Write a prompt to /n/llm/0/ask, read the completion back. Claude, Ollama, any backend—the agent sees files, not APIs. Swap providers without changing a line of code.
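From the agent's side, this is ordinary file I/O. The Python sketch below rests on two assumptions. First, `/n/llm` is already mounted on the host, for example through a 9P client. Second, the completion is read back from the same open file after the prompt is written. The `ask` function is hypothetical, and llm9p's exact file protocol may differ.

```python
import os


def ask(prompt: str, ask_file: str = "/n/llm/0/ask") -> str:
    """Write a prompt to the ask file, then read the completion back.

    Assumes the completion is returned on the same open file after the
    write; this is an illustrative reading of the ask file, not llm9p's spec.
    """
    fd = os.open(ask_file, os.O_RDWR)
    try:
        data = prompt.encode("utf-8")
        # Send the prompt as a single write so it arrives as one message.
        if os.write(fd, data) != len(data):
            raise OSError("short write to ask file")
        # Read the reply from the start of the file until end of data.
        os.lseek(fd, 0, os.SEEK_SET)
        chunks = []
        while True:
            chunk = os.read(fd, 8192)
            if not chunk:
                break
            chunks.append(chunk)
        return b"".join(chunks).decode("utf-8")
    finally:
        os.close(fd)
```

If `/n/llm` is not mounted, `os.open` raises `FileNotFoundError`. That mirrors the namespace rule: an unmounted resource is simply not there.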

Multi-Device

Veltro runs on a Raspberry Pi, a Jetson, a laptop, or a server. Mount resources from any device on the network into the agent’s namespace using 9P. The agent doesn’t know or care where the data lives—it just works.

Use Cases

Built for people who can't afford to hope the guardrails hold.

Agent Builders

Stop worrying about what your agent can access.

Building autonomous agents is terrifying when they share your OS. InferNode gives each agent an isolated namespace with only the tools and data you grant. Prompt injection? The agent literally cannot see anything outside its namespace. There is no attack surface.

Industrial & Critical Infrastructure

Formally verified. Not just tested.

Factory floors, energy grids, water systems—environments where a misbehaving AI agent isn’t an inconvenience, it’s a safety incident. InferNode’s isolation model is formally verified. Show your auditors the math, not a promise.

Finance, Legal & Healthcare

Use AI without sending your data somewhere else.

Trading strategies, client records, patient data, legal discovery—too sensitive for someone else’s cloud. InferNode runs on your hardware with local LLMs. Formally verified isolation means you can prove containment to regulators and auditors, not just promise it.

Edge & Embedded

15 MB. A $35 board. Real AI.

Kubernetes on a Raspberry Pi is a bad joke. InferNode’s base runtime starts at about 15 MB of RAM with a 2-second cold start. Deploy AI agents on Jetsons, Raspberry Pis, or any ARM64/AMD64 device, each in its own isolated namespace. With JIT compilation, it’s fast too.

Mission-Critical Operations

Works where the cloud doesn’t.

Disconnected environments, austere conditions, contested networks—places where cloud APIs are a liability, not a luxury. InferNode runs entirely on local hardware, meshes devices peer-to-peer, and keeps working when the uplink goes down. Every agent stays contained.

Distributed Systems

Your devices, working as one.

Mount a GPU from across the room or across the country. InferNode’s 9P protocol makes remote resources appear as local files. Build a mesh of devices that share compute, storage, and sensors—each node isolated, the whole system composable.

Quick Start

Launch the app or build from source.

On macOS, download and run. On Linux, download the GUI or headless tarball, or build from source. Either way—under a minute.